Thinking with AI Is a New Level of Human Agency in Its Own Right

Working with AI has quietly become a strategic activity — one that places real demands on the judgment of every professional who does serious thinking with it. Most professionals have not yet recognized it as strategic. They treat it as tool use, accumulating hours with the interface the way they once accumulated hours with a spreadsheet. The gap between professionals who develop the strategizing capability this activity requires and professionals who don't will compound — quietly — into a meaningful difference in the quality of their thinking and the quality of their work.

The cost of getting this wrong does not show up in normal working life. The output looks plausible. The decision proceeds. Whether the framing the professional adopted was the one their own judgment would have produced, whether the option set was complete, whether the pattern the tool surfaced was the one that mattered — none of this is tested against an alternative. The higher-level thinking that should have been done was instead quietly handed off, and there is no signal in the day's work that flags what was handed off or what it cost. Familiarity with the interface accumulates. The underlying capability does not.

Thinking with AI is a strategizing capability: deliberate, developable, and with its own internal architecture. It operates at a structurally distinct level within a larger map of strategic agency, the Five Business Big Pictures (Mitreanu, 2026a). The level is new, and the capability it calls for has to be developed deliberately, because use alone does not close the gap. Hours logged with the interface do not, on their own, produce the capability. Deliberate practice does: practice on a defined set of strategic actions, in environments that reproduce the dynamics at a scale where the strategist can see them work.

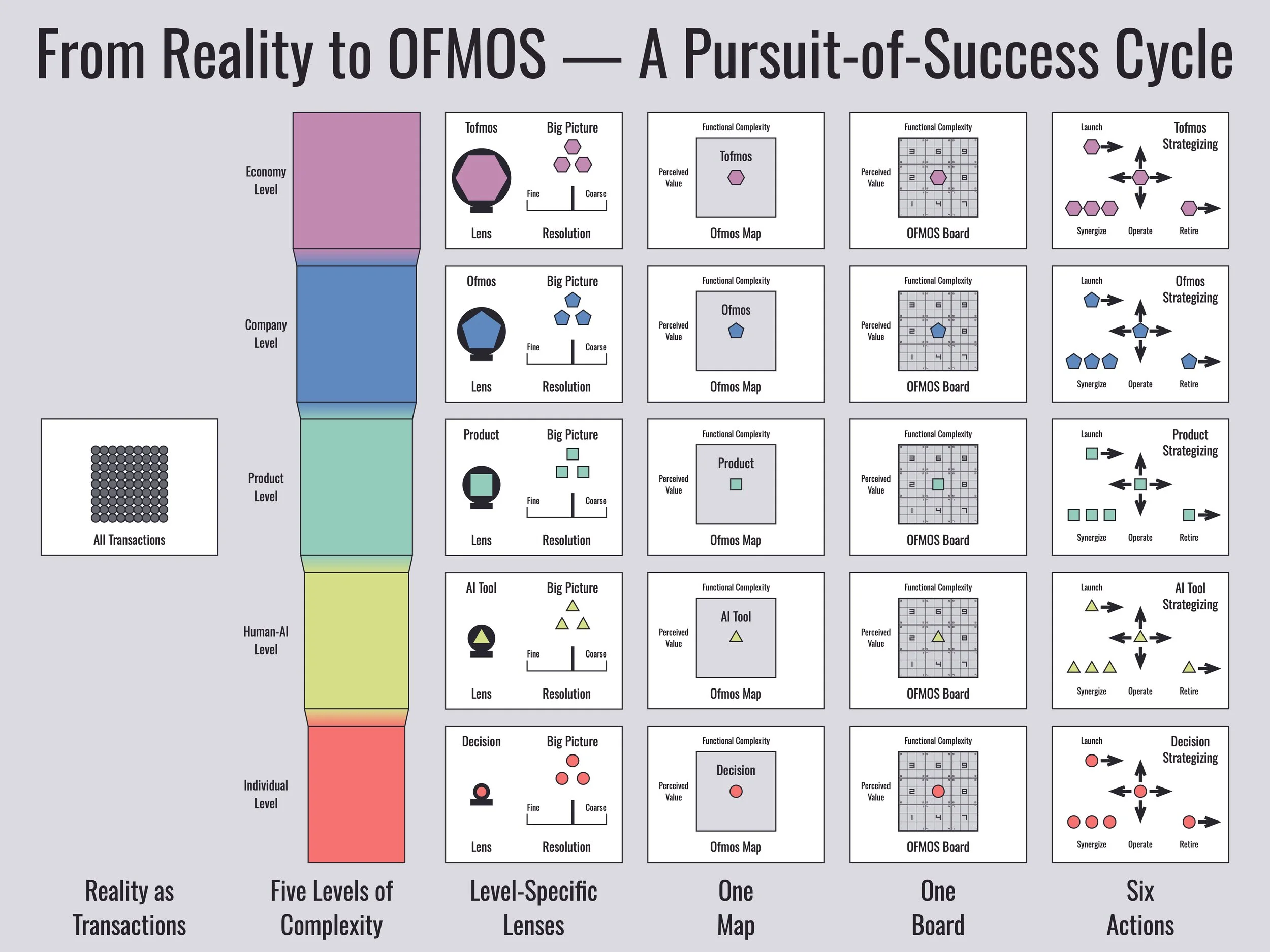

The Five Business Big Pictures identifies five levels at which an individual strategizes — five bands along a continuum of complexity, each one a stretch where a different set of dynamics governs success. At each level, the strategist wields a different instrument: the decision portfolio at the Individual Level, the AI tool portfolio at the Human-AI Level, the product portfolio at the Product Level, the ofmos portfolio at the Company Level, and the tofmos portfolio at the Economy Level. Each level has its own emergent phenomena and its own formula for success, none of which can be reduced to the levels below. The Human-AI Level sits second in the sequence, between the strategist wielding the decision portfolio alone and the strategist wielding a product portfolio in a market.

The Human-AI Level is new in one sense and old in another. It is new because strategic thinking now happens across a system in which an AI tool participates in the thinking: the tool does not just record it or compute on it, but contributes to what gets thought. It is old because the role this level plays in the structure of human agency predates any of its tools; it is not a feature of the current technological moment promoted to the status of a permanent level of agency. Writers occupied it with writing. Bookkeepers occupied it with double-entry bookkeeping. A generation of professionals occupied it with the spreadsheet.

Each tool created its own moment when people had to figure out how to think with it on purpose, and each rewarded the ones who did. The Human-AI Level is this generation's occupation of that role. The label is tied to today's technology and will change when the technology does. The role won't. The strategist who develops the capability now, with the most powerful cognitive tool yet built, is joining a lineage rather than catching a wave. The demand for it never expires.

1. Strategic thinking now spans human and tool

Throughout this post, "strategist" means every professional doing thinking work: anyone whose job involves making decisions, framing problems, or weighing options. The word names a role, not a title. Anyone working with AI tools today is, in this sense, a strategist.

When decisions are made using only the strategist's own cognition, strategic thinking is bounded by what that cognition can produce on its own: the framings the mind notices, the options it generates, the alternatives it can hold in working memory. The strategist works with what their cognition makes available and chooses among it. The cognitive system is unified by default — there is only one of it — and the strategist's task is to make that single system work as well as it can.

When decisions are made using AI tools that participate in the thinking process — tools that contribute to the framings and options the strategist works with and to how the strategist evaluates them — the boundary moves. Strategic thinking now happens across a system that includes both the human and the tools. The cognitive system is no longer unified by default; whether it functions as one system or as a collection of partially aligned components becomes a question the strategist must actively answer. What framings get adopted and which options get pursued now depend on the tool's contributions and on what the human accepts, modifies, or rejects.

That cognition can extend across human-tool systems is not a new observation. Cognitive science has been studying it for decades, under headings like distributed cognition (Hutchins, 1995) and the extended mind (Clark & Chalmers, 1998). Hutchins's analysis of a naval navigation team showed that the navigation was not done by any single mind but by a system that included people, charts, instruments, and procedures. Clark and Chalmers argued that under certain conditions, cognitive processes can be constituted by resources distributed across the brain, the body, and the environment — a notebook used as memory becomes part of the cognitive system, not just a record of it. Those literatures established that cognition can extend across human-tool systems. What they did not address is the strategic-agency dimension: what the strategist does when their thinking is so extended, what makes the extended system work well or badly, what the formula for success is. That is the dimension this post is about — and the dimension that has become urgent because of one specific feature of AI's participation: it reaches further upstream, into the generation of the candidate goals among which the strategist selects, than previous cognitive technologies reached.

What this produces is something that did not exist when the strategist worked alone: a decision-making capability that emerges from the interaction between the strategist and the tool, with the strategist's judgment doing the integrative work that holds it together. The framings and options the strategist works with depend on what the tool generates. The choices among them depend on what the strategist accepts, modifies, or rejects. The reasoning that produces the decision is shaped by an interactive sequence — the tool's contribution shapes what the strategist considers next, which shapes what the tool produces next — that neither side controls alone. The framework calls this augmented judgment, and it is the emergent phenomenon that defines the Human-AI Level of strategic agency.

Which way the augmented-judgment system leans is not predetermined. When strategic thinking spans human and tool, the strategist sits somewhere along a spectrum running from active augmentation — the strategist's judgment integrating the tool's contributions in service of higher-level goals the strategist is building and refining — toward surrender, where the strategist accepts the tool's framings as the framings, treats its choices as the choices, and takes its output as the answer. The two ends are not symmetric. Augmentation is the working configuration: judgment in charge, tool participating. Surrender is the failure mode: judgment ceded, tool effectively in charge. Most strategists sit somewhere in the middle, drifting toward surrender unless they are actively working against that drift — because the drift is the path of least cognitive effort, and the cost of taking it is not visible in the moment.

The cost of drifting toward surrender is not incidental — it follows from the role higher-level goals play in the One-Need Theory of Behavior (Mitreanu, 2026b). The theory holds that the higher-level goals are not decorative: they are the prediction mechanism that lets the individual anticipate the lower-level goals their changing circumstances will produce, and the adaptive scaffolding that lets them reconfigure when conditions change. Surrender that layer to the tool, and the individual loses both. Every change in circumstances now forces a fresh, energy-taxing round of goal aggregation-disaggregation, and the cognitive load the hierarchy normally absorbs returns in full. This is why deciding what the tool contributes to and what remains the strategist's alone is the strategist's work, not the tool's — and why doing it well is a strategizing capability rather than a matter of getting good at the interface.

Which way the judgment leans is not a property of AI. It is a property of the strategist's deliberate practice.

2. Strategizing at this level is AI tool portfolio management with intent

The instrument the strategist wields at this level is a portfolio of AI tools. Each tool occupies a position defined by two coordinates: perceived value (what the tool contributes to the strategist's pursuit of successful existence) and functional complexity (the effort to deploy it in one additional session). The strategizing capability is portfolio management with intent — the judgment of what to add, how to position and reposition each tool, and what to retire.
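As a rough illustration of the two coordinates, the portfolio can be sketched as a small data structure. The 1-to-5 scales, the tool names, the scores, and the `by_value` helper below are assumptions made for the example; the framework as described here names the coordinates but does not prescribe numeric scales.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tool:
    """One AI tool, positioned by the two coordinates named in the text."""
    name: str
    perceived_value: int        # hypothetical 1-5 scale: contribution to the strategist's pursuit
    functional_complexity: int  # hypothetical 1-5 scale: effort to deploy in one more session


def by_value(portfolio: list[Tool]) -> list[str]:
    """Tool names from highest to lowest perceived value; ties go to the lower complexity."""
    ranked = sorted(portfolio, key=lambda t: (-t.perceived_value, t.functional_complexity))
    return [t.name for t in ranked]


# Illustrative portfolio; the tool names and scores are invented.
portfolio = [
    Tool("drafting assistant", perceived_value=3, functional_complexity=1),
    Tool("research agent", perceived_value=4, functional_complexity=4),
    Tool("meeting summarizer", perceived_value=3, functional_complexity=2),
]

print(by_value(portfolio))
```

Treating each tool as an immutable record is a choice made for the sketch: repositioning a tool then means creating a new record at new coordinates, which keeps each portfolio decision explicit.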

The formula for success at this level, like the formula at every level, has a structural requirement and a responsive component. The structural requirement is the general condition for the emergent phenomenon — augmented judgment — to persist and remain functional, regardless of the strategist's particular situation. At the Human-AI Level it has two parts: cognitive alignment (the portfolio anchored in the strategist's judgment, the tools serving higher-level thinking rather than substituting for it) and AI tool synergies (combinations in which tools work together as a system, producing something none of them produces alone). Synergies are structural, not optional, because efficiency is foundational to how intelligent systems operate (Mitreanu, 2026b). The responsive component is the strategist's situated work on that condition: which synergies to construct, which tools to add or retire, and when to deliberately accept near-term drift in either part for a higher-value move. That trade-off is part of the strategizing, not a violation of the formula.

This work sits at a different scope than the moment-to-moment use of any single tool. Within a session, the strategist still makes micro-decisions — when to lean on a tool, when to hold back, how to weave its contributions in — but those decisions are shaped by portfolio composition rather than the other way around. The strategizing capability is concerned with the portfolio as a whole, over weeks, months, and years as the strategist's work changes.

A strategist who has not developed this capability is not using AI poorly in any visible way. The questions get answered. The drafts get written. What is missing is the layer of judgment that determines whether the portfolio is augmenting the strategist's thinking or quietly shaping it.

3. The Human-AI Level sits within the larger map of strategic agency

The five levels of human agency as developed in The Five Business Big Pictures framework. The Human-AI Level sits second, between the Individual Level and the Product Level. From the blog post "From the Real World to OFMOS, and Back."

The Human-AI Level sits between the Individual Level and the Product Level.

From the Individual Level, the Human-AI Level inherits the most important thing. The strategist's judgment is what holds the system together. Augmented judgment is not a third thing made of half-strategist and half-tool. It is the strategist's judgment, operating with the tool's contributions inside it — and it remains the strategist's judgment because only the strategist has the overarching need, successful existence in their own particular circumstances, that the judgment is in service of. Cognitive alignment, one of the two parts of the structural requirement at this level, is the name for this fact made operational.

What's new is the kind of work that becomes part of strategizing. At the Individual Level the cognitive system is unified by default — there is only one of it — and there is nothing to align and nothing to combine. When AI tools enter the thinking, the system is no longer unified by default. The portfolio has to be held anchored to the strategist's judgment. The tools have to be combined into a system that produces something none of them produces alone. Work that did not exist at the Individual Level now does.

Toward the Product Level, the Human-AI Level points at something the strategist has not yet encountered. The portfolio of AI tools is composed of items outside the strategist's own cognition — items the strategist acquires, configures, and retires under the same dynamics that govern any portfolio. This is the first level where the portfolio works that way, with the strategist as both manager and end user. At the Product Level the portfolio is still composed of external items, but the user role drops out: the strategist composes offerings for customers.

4. AI participates in the construction of the strategist's thinking; prior cognitive technology did not

Cognitive technologies that augment human cognition share a structural feature. Writing recorded thoughts the writer was forming. Double-entry bookkeeping organized accounts the bookkeeper had defined. The spreadsheet executed operations on a model the user built. Each shaped how the user could think — and each operated on a representational frame the user supplied. AI tools belong to the same lineage. They break the pattern by participating in the construction of the frame itself.

The distinction is not whether the technology generates outputs. The spreadsheet generates derived values, charts, and scenario results the user did not directly enter; the calculator generates products and sums; even a thesaurus generates candidate words. What every prior cognitive technology has in common is that it operates within a frame the user has already supplied — the model the spreadsheet computes on, the operation the calculator executes, the word the thesaurus is asked to vary. The frame is the user's. The tool fills in within it. AI participates in constructing the frame. When an AI tool proposes alternatives the strategist had not generated, surfaces patterns the strategist would not have surfaced, or extends the range of framings beyond the strategist's habitual reach, it is contributing to what the frame is, not filling in within it.

In the language of the One-Need Theory of Behavior — where the strategist's hierarchy of needs is continuously constructed through the bidirectional process of disaggregation and aggregation — every prior cognitive technology operated at or before the point where the strategist had already disaggregated their need down to something matchable with the tool. AI participates in the bidirectional construction itself. A sub-goal it proposes is at once a disaggregation of the level above and a frame for the level below; a higher-level framing it surfaces is at once an aggregation of the levels beneath and a specification of what they should contain.

This is what makes the strategizing capability at this level structurally new. The cognitive technology now contributes to the construction of the strategist's thinking, and the strategist's task includes deciding which of those contributions to accept, modify, or reject — in parts of their own thinking that no external technology has previously reached. Engelbart anticipated the structure of deliberate augmentation in Augmenting Human Intellect (Engelbart, 1962), but he did not anticipate a technology that participates in constructing what the strategist is thinking about. The Five Business Big Pictures is the strategic-agency complement to Engelbart's tradition. His program described the architecture of augmentation. The framework describes what the strategist must do, deliberately, when augmentation reaches into the construction of the strategist's thinking itself.

5. The Human-AI Level is a real level of strategic agency

The Five Business Big Pictures rests on a specific intellectual foundation. Three principles from complexity science establish what counts as a real level of organization in a hierarchical system. Anderson's account of emergence (Anderson, 1972) holds that at each level of such a system, new properties appear that cannot be reduced to the level below. Simon's account of hierarchical modularity (Simon, 1962) holds that complex systems organize as nested modules with strong internal coupling and weaker coupling to other modules. Bar-Yam's complexity profile (Bar-Yam, 1997) holds that systems exhibit qualitatively distinct behavior at different scales of observation, and that real levels of organization are the scales at which those qualitative shifts occur.

The Human-AI Level satisfies all three. The emergent property is augmented judgment: a decision-making capability that arises from the interaction of the strategist's hierarchy of needs and the tools' contributions and is reducible to neither alone. The strategist alone has the overarching need and the judgment that organizes thinking around it, but lacks what the tools contribute. The tools alone produce contributions but lack the integrating judgment that organizes them around an overarching need. Augmented judgment is a property of the system that includes both.

The module is the AI tool portfolio. The tools are tightly coupled to each other in their service to the strategist's thinking and more weakly coupled both to the strategist's cognition and to the broader environment in which the strategist works. The boundary between the portfolio and the strategist's cognition is the strategic variable the formula for success operates on.

The qualitative shift is the cognitive technology now participating in the construction of the strategist's thinking, not just in operations within frames the strategist has already supplied. That participation is the kind of qualitative shift Bar-Yam's complexity profile identifies as marking a distinct level of organization.

The level is real in the same way the Individual Level and the Product Level are real. Each produces phenomena the level below does not, each has its own dominant dynamics, and each requires its own strategizing capability. Reading the Human-AI Level as an extension of the Individual Level — the strategist's cognition with a new accessory — misses what the level is. Reading it as a niche between the Individual and Product Levels — a transitional zone that will collapse once AI becomes ordinary — misses what makes it durable. The Human-AI Level is a level in the structural sense the complexity-science foundation defines, and it has been one for as long as cognitive technologies have participated in the construction of human thinking. AI is the current and most powerful instance.

6. The strategizing capability outlasts the technology that defines it today

The framework treats its levels as anchor points along a continuum of complexity that the observer identifies, not as fixed features built into the strategic landscape. The levels are real — the dynamics at each are genuine — but the qualitative shifts that characterize them can fade as the technology, practice, or domain that defined them becomes embedded into normal cognitive life. The spreadsheet, when it was new, was a cognitive technology that required real strategic attention. Financial analysts and managers had to think carefully about how to wield it, what to delegate to it, when to trust its output. Today, working with spreadsheets at the basic level is so embedded in professional work that it no longer reads as strategizing, even though advanced financial modeling still does. The technology has been absorbed into the background of Individual-Level work for most uses.

The same thing may eventually happen with AI augmentation. If managing AI tools becomes so deeply embedded in cognition that it stops registering as a distinct challenge — the way running an internet presence was a CEO-level concern in the late 1990s and is now assumed background — the Human-AI Level would be absorbed back into the Individual Level. That outcome would confirm the framework's logic, not contradict it. A level is real when the qualitative shift that defines it is observable; when the shift fades, the level fades with it.

What survives any such absorption is the strategizing capability itself. The technology turns over; the capability remains. Through all of it, the capability is the same: wielding cognitive technologies deliberately, so that one's thinking is augmented rather than replaced. The label "Human-AI" anchors the level to the current moment. The role it names — strategizing across a system in which a cognitive technology participates in the strategist's thinking rather than only recording or executing on it — is older than the label and will outlast it, just as it carried across writing, double-entry bookkeeping, and the spreadsheet, each of which defined the role for its own generation before yielding to the next.

7. AI tool portfolio management develops only through deliberate practice

The strategizing capability at the Human-AI Level is not built by accumulated hours alone. More than three decades of research on expert performance shows that expertise comes from deliberate practice — focused work on the specific subskills that distinguish high performers, with feedback that allows misjudgments to be identified and corrected (Ericsson, Krampe, & Tesch-Römer, 1993). Time spent with a tool produces familiarity with the tool. Deliberate practice produces the capability. The two are not the same.

At the Human-AI Level, the cost of ceded judgment doesn't surface in normal working contexts in a way the strategist can learn from. Familiarity with the interface accumulates; the capability does not. The strategist needs an environment that makes the cost surface — quickly, repeatedly, in ways they can act on.

The capability develops fastest in environments designed to make ceded judgment visible — environments that compress the feedback loop between a strategic move and its consequence, surface the cost of misjudgment quickly, and let the strategist try again, differently, immediately. Simulations are well-suited to this requirement: they isolate the dynamics from real-world noise, compress time scales, and let the strategist re-run scenarios without real-world cost. What makes any simulation effective is fidelity to how the dynamics actually work — the patterns that arise during practice need to be the patterns the strategist will face in working life, not metaphors for them.

A parallel observation comes from the Augmented Cognition research program, a 25-year-old applied field that has studied how computational technology can extend human cognitive performance, primarily at the operational and tactical levels (Schmorrow et al., 2009; Stanney et al., 2009). The strategic level, as a recent taxonomy paper from the field notes, is "practically unexplored," with management and strategic cognition specifically named as fields that would benefit from cognitive augmentation tools (Pinello et al., 2023). The Human-AI Level of strategic agency is the framework's contribution to that gap, and the practice path it points to is deliberate practice on the strategic actions that constitute AI tool portfolio management.

8. Six actions shape the AI tool portfolio

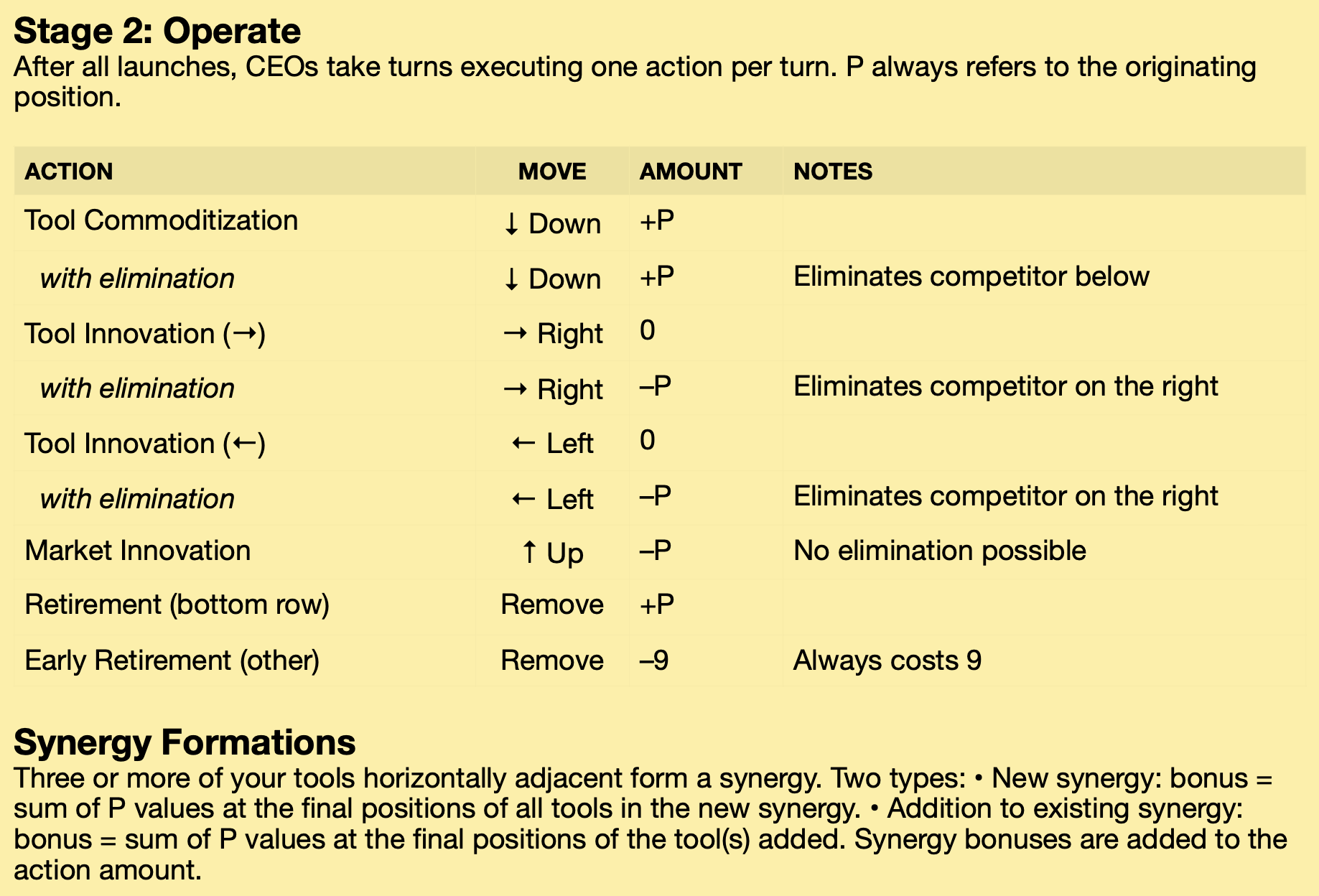

The framework's six strategic actions, translated into AI tool portfolio management at the Human-AI Level. From the Strategy Learning Guide for the Human-AI Level with OFMOS® Essential (Pilot Edition), April 2026.

The strategizing capability at the Human-AI Level operates through six actions. Six because they exhaust the moves available to the strategist on the Ofmos Map — the framework's two-dimensional landscape, defined by perceived value (vertical) and functional complexity (horizontal). Two of the six put a tool into the portfolio or take it out. The other four move the tool on the map — vertically toward higher or lower perceived value, horizontally toward greater or lesser functional complexity. Nothing the strategist does on the portfolio falls outside this set.

At the Human-AI Level, the unit of management is the AI tool. The six actions, in an order that combines lifecycle position and innovation effort, are: launch (adopting a new tool into the portfolio); commoditize (accepting the drop in perceived value that routine use of the tool produces, rather than fighting it through innovation); innovate by decreasing the tool's functional complexity (stripping the tool back to its core function when over-elaboration no longer earns its cost); innovate by increasing the tool's functional complexity (activating advanced capabilities, building custom workflows, or integrating the tool more tightly with the rest of the portfolio); innovate by repositioning the tool to a higher-value need (putting it to work on a goal closer to what matters most in the strategist's pursuit); and retire (removing the tool from the portfolio entirely).
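The six actions can be sketched as operations on a portfolio of positioned tools. This is a minimal illustration under stated assumptions, not the framework's own formalization: the `Tool`, `Action`, `MOVES`, and `apply` names are hypothetical, the integer scales are invented, and each of the four map moves is assumed to shift a tool by exactly one unit along one axis.

```python
from dataclasses import dataclass, replace
from enum import Enum


@dataclass(frozen=True)
class Tool:
    name: str
    perceived_value: int        # vertical axis of the map (hypothetical integer scale)
    functional_complexity: int  # horizontal axis of the map (hypothetical integer scale)


class Action(Enum):
    LAUNCH = "launch"
    COMMODITIZE = "commoditize"
    DECREASE_COMPLEXITY = "innovate by decreasing functional complexity"
    INCREASE_COMPLEXITY = "innovate by increasing functional complexity"
    REPOSITION_HIGHER = "innovate by repositioning to a higher-value need"
    RETIRE = "retire"


# Assumed unit steps: (change in perceived value, change in functional complexity).
MOVES = {
    Action.COMMODITIZE: (-1, 0),
    Action.DECREASE_COMPLEXITY: (0, -1),
    Action.INCREASE_COMPLEXITY: (0, 1),
    Action.REPOSITION_HIGHER: (1, 0),
}


def apply(portfolio: dict[str, Tool], action: Action, tool: Tool) -> dict[str, Tool]:
    """Return a new portfolio with `action` applied to `tool`; the input is left unchanged."""
    updated = dict(portfolio)
    if action is Action.LAUNCH:
        updated[tool.name] = tool            # the tool enters the portfolio
    elif action is Action.RETIRE:
        updated.pop(tool.name, None)         # the tool leaves the portfolio
    else:
        dv, dc = MOVES[action]
        current = updated[tool.name]         # raises KeyError if the tool was never launched
        updated[tool.name] = replace(
            current,
            perceived_value=current.perceived_value + dv,
            functional_complexity=current.functional_complexity + dc,
        )
    return updated


# Usage: launch a tool, deepen its use, then point it at a higher-value need.
assistant = Tool("drafting assistant", perceived_value=3, functional_complexity=2)
p = apply({}, Action.LAUNCH, assistant)
p = apply(p, Action.INCREASE_COMPLEXITY, assistant)
p = apply(p, Action.REPOSITION_HIGHER, assistant)
print(p["drafting assistant"])
```

Two actions change membership and four change position, which mirrors the split described in the text. Which action to take, on which tool, and when, is the judgment the sketch leaves out.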

These six actions are how the strategist works on the portfolio. Through them, the strategist serves the formula for success — the structural requirement (cognitive alignment and AI tool synergies, both of which the portfolio must sustain for augmented judgment to remain functional) and the responsive component (situated choices about which synergies to construct, which tools to add or retire, when to accept near-term drift in service of a higher-value move). Every action serves one or both ingredients. A launch may construct a new synergy or restore alignment that had been lost. A retirement may end a misaligned use or close a synergy that no longer earns its place. The actions don't map one-to-one to the ingredients of the formula; they're the moves through which the strategist holds both ingredients together over time.

Because the six actions cover everything the strategist can do on the portfolio, judgment at the Human-AI Level is the judgment of when to take which action, on which tool. That judgment is the capability the strategist takes with them into whatever comes after AI.

9. The strategizing capability at the Human-AI Level outlasts the technologies that demand it

The capability the strategist develops at this level is not narrowly tied to AI. It is the more general capability of wielding a cognitive technology deliberately — of deciding what the technology contributes to and what remains the strategist's alone, of using the technology without being used by it. AI augmentation is the current and most powerful expression of that capability. The next dominant cognitive technology will demand it again, in a form none of us can fully picture yet.

Strategists who develop the capability now, through deliberate practice on the work AI tools require, are not just getting better at thinking with AI. They are building the underlying capability that has rewarded its practitioners across every generation of cognitive technology — from writing, to double-entry bookkeeping, to the spreadsheet, to whatever comes after AI. The capability compounds. The technology turns over.

The gap between strategists who develop this capability and strategists who treat AI as something to pick up by use is real, and it widens with time. Every day, work goes through the same augmented-judgment system. Some strategists are shaping that system deliberately. Most are not. The difference doesn't show up in the day's output, which is why the gap is invisible while it forms — and why the strategist who starts the practice now is the one entering this older role at the moment its newest expression is being shaped.

References

Anderson, P.W. (1972). "More Is Different." Science, 177(4047), 393–396.

Bar-Yam, Y. (1997). Dynamics of Complex Systems. Addison-Wesley.

Clark, A. & Chalmers, D. (1998). "The Extended Mind." Analysis, 58(1), 7–19.

Engelbart, D.C. (1962). Augmenting Human Intellect: A Conceptual Framework. SRI Summary Report AFOSR-3223. Stanford Research Institute.

Ericsson, K.A., Krampe, R.T. & Tesch-Römer, C. (1993). "The Role of Deliberate Practice in the Acquisition of Expert Performance." Psychological Review, 100(3), 363–406.

Hutchins, E. (1995). Cognition in the Wild. MIT Press.

Mitreanu, C. (2026a). The Five Business Big Pictures. Ofmos Universe. Available at ofmos.com/the-strategy-framework.

Mitreanu, C. (2026b). The One-Need Theory of Behavior and the Ofmos Theory of Business. Ofmos Universe. Available at ofmos.com/the-foundational-theories.

Pinello, C., et al. (2023). "Augmented Cognition Compass: A Taxonomy of Cognitive Augmentations." In HCI International 2023, Springer.

Schmorrow, D.D., Stanney, K.M., Wilson, G., & Young, P. (2009). "Augmented Cognition in Human-System Interaction." In G. Salvendy (ed.), Handbook of Human Factors and Ergonomics, 3rd edn., John Wiley.

Simon, H.A. (1962). "The Architecture of Complexity." Proceedings of the American Philosophical Society, 106(6), 467–482.

Stanney, K.M., Schmorrow, D.D., Johnston, M., Fuchs, S., Jones, D., Hale, K.S., Nicholson, D., Biers, D.W., & Young, P. (2009). "Augmented Cognition: An Overview." Reviews of Human Factors and Ergonomics, 5(1), 195–224.